#include <sys/types.h>

#include <stdio.h>

#include <unistd.h>

#define SIZE 5

int nums[SIZE] = { 0,1,2,3,4 } ;

int main() {

int i;

pid t pid;

pid = fork();

if (pid == 0) {

for (i = 0; i < SIZE; i++) {

nums[i] *= -i;

printf("CHILD: %d ",nums[i]); /* LINE X */

}

} else if (pid > 0) {

wait(NULL);

for (i = 0; i < SIZE; i++) {

printf("PARENT: %d ",nums[i]); /* LINE Y */

}

}

return 0;

}

News

Dates of the Exam - 06 March 2019

The ordinary exam in DM510 will be held June 17th-19th.

The external examiner speaks danish, so danish and english are both valid languages for the exam.

Facebook Group - 13 December 2018

There is a Facebook Group for informal discussions, semi-irrelevant announcements etc. Find it here

Weekly Notes

Here you will find recommended reading, what we expect to cover in the lecture, slides and material for the exercise sessions. The week numbers relate to the week of the lecture, and thus, any monday sessions is filed in the previous week.

Weekly Note 1 - Week 6 - Online from 15 January 2019

Lecture - Tuesday, February 5th.

16-19 in U82

We will start with an introduction to the course, discus the format of the lectures and tutorials, and expectations.

We will have an introduction to operating systems (Chapter 1), discussing the grand tour of the major components, describing the journey for this semester. I expect to cover chapter 1 fairly quickly, as it is primarily an introduction to the material in the rest of the course.

We will then turn the attention to chapter 2: System Structures, that describes the services an operating system provides, ways to structure an OS and installation and booting.

Tutorial session

Thursday February 7th. 8-10 in U166 or Friday February 8th. 8-10 in U166

Preparation:

Make a list of 10-15 keywords for a 10 min. presentation with the topic: "Operating-System Structures"

Prepare at home to discuss:

-

What is the main advantage of the layered approach to system design? What are the disadvantages of the layered approach?

-

What is the purpose of system calls?

-

What are the advantages and disadvantages of using the same system-call interface for manipulating both files and devices?

-

Describe three general methods for passing parameters to the operating system.

-

What are the five major activities of an operating system with regard to file management?

-

What are the two models of interprocess communication? What are the strengths and weaknesses of the two approaches?

-

It is sometimes difficult to achieve a layered approach if two components of the operating system are dependent on each other. Identify a scenario in which it is unclear how to layer two system components that require tight coupling of their functionalities.

-

What is the main advantage of the microkernel approach to system design? How do user programs and system services interact in a microkernel architecture? What are the disadvantages of using the microkernel approach?

In class:

Discus your list of keywords, and the excercises prepared at home.

For the remainder of the class, get started programming some C - i.e. work on the first assignment.

Recommended Reading

-

Chapter 1 and 2 in Operating System Concepts, Enhanced eText, 10th Edition

Material (Slides, etc.)

-

Course Intro Slides and as HTML

-

Slides for lecture 1 and as HTML

Weekly Note 2 - Week 7 - Online from 08 February 2019

Lecture - Tuesday, February 12th.

16-19 in U82

We will cover chapter 3: Processes and Chapter 4 on Threads & Concurrency. These two chapters will be the primary material for exam topic 2: "Processes, Threads and Concurrency".

We will have a small review of the previous lecture, so this time it will be chapter 1 and 2, and we might sneak in a Kahoot.

Please install the Kahoot app on your phone, if you wish to participate ;)

Tutorial session

Thursday February 14th. 8-10 in U31 or Friday February 15th. 12-14 in U155

Preparation:

Make a list of 10-15 keywords for a 10 min. presentation with the topic: "Processes, Threads and Concurrency"

Prepare at home to discuss:

-

Describe the differences among short-term, medium-term, and long-term scheduling.

-

Describe the actions taken by a kernel to context-switch between processes.

-

Explain the role of the init process on UNIX and Linux systems in regards to process termination.

-

Consider the RPC mechanism. Describe the undesirable consequences that could arise from not enforcing either the "at most once" or "exactly once" semantic. Describe possible uses for a mechanism that has neither of these guarantees.

-

Using the program shown in Figure below, explain what the output will be at lines X and Y.

-

Under what circumstances does a multithreaded solution using multiple kernel threads provide better performance than a single-threaded solution on a single-processor system?

-

Which of the following components of program state are shared across threads in a multithreaded process?

-

Register values

-

Heap memory

-

Global variables

-

Stack memory

-

-

Can a multithreaded solution using multiple user-level threads achieve better performance on a multiprocessor system than on a single processor system? Explain.

-

Is it possible to have concurrency but not parallelism? Explain.

-

A system with two dual-core processors has four processors available for scheduling. A CPU-intensive application is running on this system. All input is performed at program start-up, when a single file must be opened. Similarly, all output is performed just before the program terminates, when the program results must be written to a single le. Between startup and termination, the program is entirely CPU-bound. Your task is to improve the performance of this application by multi-threading it. The application runs on a system that uses the one-to-one threading model (each user thread maps to a kernel thread).

-

How many threads will you create to perform the input and output? Explain.

-

How many threads will you create for the CPU-intensive portion of the application? Explain.

-

-

Consider a multiprocessor system and a multithreaded program written using the many-to-many threading model. Let the number of user-level threads in the program be more than the number of processors in the system. Discuss the performance implications of the following scenarios.

-

The number of kernel threads allocated to the program is less than the number of processors.

-

The number of kernel threads allocated to the program is equal to the number of processors.

-

The number of kernel threads allocated to the program is greater than the num- ber of processors but less than the number of user-level threads.

-

Make a list of 10-15 keywords for a 10 min. presentation with the topic: "Processes, Threads and Concurrency"

In class:

Use the first 30 minutes to work on those exercises:

-

Including the initial parent process, how many processes are created by the program shown in the following program:

#include <stdio.h>

#include <unistd.h>

int main() {

/* fork a child process */

fork();

/* fork another child process */

fork();

/* and fork another */

fork();

return 0;

}

-

Explain the circumstances when the line of code marked printf("LINE J") in the Figure below is reached.

#include <sys/types.h>

#include <stdio.h>

#include <unistd.h>

int main() {

pid_t pid;

/* fork a child process */

pid = fork();

if (pid < 0) { /* error occurred */

fprintf(stderr, "Fork Failed");

return 1;

} else if (pid == 0) { /* child process */

execlp("/bin/ls","ls",NULL);

printf("LINE J");

} else { /* parent process */

/* parent will wait for the child to complete */

wait(NULL);

printf("Child Complete");

}

return 0;

}

-

What are the benefits and the disadvantages of each of the following? Consider both the system level and the programmer level.

-

Synchronous and asynchronous communication

-

Automatic and explicit buffering

-

Send by copy and send by reference

-

Fixed-sized and variable-sized messages

-

-

Using Amdahl’s Law, calculate the speedup gain of an application that has a 60 percent parallel component for (a) two processing cores and (b) four processing cores.

-

As described in Section 4.7.2, Linux does not distinguish between processes and threads. Instead, Linux treats both in the same way, allowing a task to be more akin to a process or a thread depending on the set of ags passed to the clone() system call. However, many operating systems, such as Windows XP and Solaris, treat processes and threads differently. Typically, such systems use a notation wherein the data structure for a process contains pointers to the separate threads belonging to the process. Contrast these two approaches for modeling processes and threads within the kernel.

Then spend the remaining time discussing your list of keywords, and the excercises prepared at home and in class.

Recommended Reading

-

Chapter 3 and 4 in Operating System Concepts, Enhanced eText, 10th Edition

Material (Slides, etc.)

-

Slides for lecture 2 and as HTML

Weekly Note 3 - Week 8 - Online from 13 February 2019

There will be no classes in week 9, due to constraints at work for Jacob (I’ll be in Macedonia). Thus no lecture February 26th or tutorials on February 28th. I’m sorry for the inconvenience this may cause.

Luckily, there is project work :) So you should be able to get far with the second project.

See you in week 10 where we will start the next big section of the course: Process Synchronization.

With this change, week 20 seems to be the last week with classes in DM510.

Lecture - Tuesday, February 19th.

16-19 in U82

We will cover chapter 5: CPU scheduling. This chapter will be the primary material for exam topic 3: "CPU scheduling".

The first projects have been handed in, so we will take a look at (annonymized) code snippets from the first round of the projects. We will be doing some code review and discussing style, naming etc., helping you become better in the fine craftmanship of programming.

We will have a small review of the previous lecture, so this time it will be chapter 3 and 4, and we might sneak in a Kahoot.

Tutorial session

Thursday February 20th. 8-10 in U31 or Friday February 21th. 12-14 in U155

Preparation:

Make a list of 10-15 keywords for a 10 min. presentation with the topic: "CPU scheduling"

Prepare at home to discuss:

-

Why is it important for the scheduler to distinguish I/O-bound programs from CPU- bound programs?

-

Discuss how the following pairs of scheduling criteria conflict in certain settings.

-

CPU utilization and response time

-

Average turnaround time and maximum waiting time

-

I/O device utilization and CPU utilization

-

-

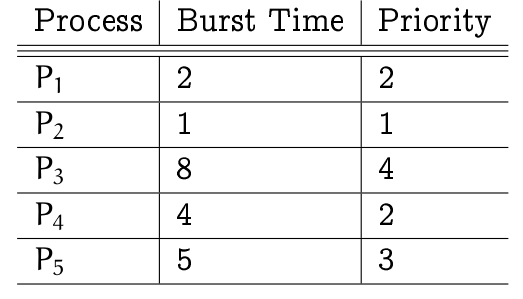

Consider the following set of processes, with the length of the CPU burst time given in milliseconds:

The processes are assumed to have arrived in the order P1 , P2 , P3, P4 , P5 all at time 0.-

Draw four Gantt charts that illustrate the execution of these processes using the following scheduling algorithms: FCFS, SJF, nonpreemptive priority (a smaller priority number implies a higher priority), and RR (quantum = 1).

-

What is the turnaround time of each process for each of the scheduling algorithms in part a?

-

What is the waiting time of each process for each of these scheduling algorithms?

-

Which of the algorithms results in the minimum average waiting time (over all processes)?

-

-

Which of the following scheduling algorithms could result in starvation?

-

First-come, first-served

-

Shortest job first

-

Round robin

-

Priority

-

-

Consider a variant of the RR scheduling algorithm where the entries in the ready queue are pointers to the PCBs.

-

What would be the effect of putting two pointers to the same process in the ready queue?

-

What would be two major advantages and disadvantages of this scheme?

-

How would you modify the basic RR algorithm to achieve the same effect without the duplicate pointers?

-

-

Consider a system running ten I/O-bound tasks and one CPU-bound task. Assume that the I/O-bound tasks issue an I/O operation once for every millisecond of CPU computing and that each I/O operation takes 10 milliseconds to complete. Also assume that the context-switching overhead is 0.1 millisecond and that all processes are long-running tasks. Describe is the CPU utilization for a round-robin scheduler when:

-

The time quantum is 1 millisecond

-

The time quantum is 10 milliseconds

-

-

Explain the differences in how much the following scheduling algorithms discriminate in favor of short processes:

-

FCFS

-

RR

-

Multilevel feedback queues

-

In class:

Use the first 30 minutes to work on those exercises:

-

In this chapter, we discussed possible race conditions on various kernel data structures. Most scheduling algorithms maintain a run queue, which lists processes eligible to run on a processor. On multicore systems, there are two general options: (1) each processing core has its own run queue, or (2) a single run queue is shared by all processing cores. What are the advantages and disadvantages of each of these approaches?

-

Consider the exponential average formula used to predict the length of the next CPU burst. What are the implications of assigning the following values to the parameters used by the algorithm?

-

α = 0 and τ0 = 100 milliseconds

-

α = 0.99 and τ0 = 10 milliseconds

-

-

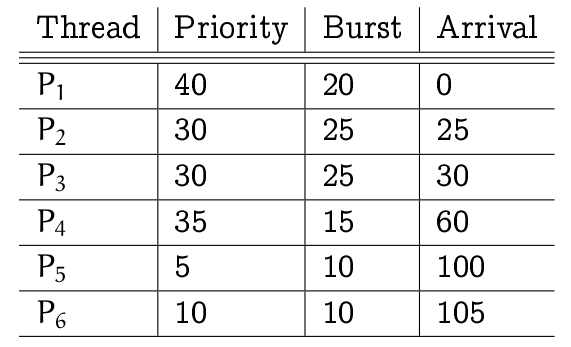

The following processes are being scheduled using a preemptive, round-robin scheduling algorithm. Each process is assigned a numerical priority, with a higher number indicating a higher relative priority. In addition to the processes listed below, the system also has an idle task (which consumes no CPU resources and is identified as Pidle). This task has priority 0 and is scheduled whenever the system has no other availableprocesses to run. The length of a time quantum is 10 units. If a process is preempted by a higher-priority process, the preempted process is placed at the end of the queue.

-

Show the scheduling order of the processes using a Gantt chart.

-

What is the turnaround time for each process?

-

What is the waiting time for each process?

-

What is the CPU utilization rate?

-

-

Consider a system implementing multilevel queue scheduling. What strategy can a computer user employ to maximize the amount of CPU time allocated to the user’s process?

-

Consider a preemptive priority scheduling algorithm based on dynamically changing priorities. Larger priority numbers imply higher priority. When a process is waiting for the CPU (in the ready queue, but not running), its priority changes at a rate α ; when it is running, its priority changes at a rate β . All processes are given a priority of 0 when they enter the ready queue. The parameters α and β can be set to give many different scheduling algorithms.

-

What is the algorithm that results from β > α > 0?

-

What is the algorithm that results from α < β < 0?

-

-

Assume that two tasks A and B are running on a Linux system. The nice values of A and B are -5 and +5, respectively. Using the CFS scheduler as a guide, describe how the respective values of vruntime vary between the two processes given each of the following scenarios:

-

Both A and B are CPU-bound.

-

A is I/O-bound, and B is CPU-bound.

-

A is CPU-bound, and B is I/O-bound.

-

Then spend the remaining time discussing your list of keywords, and the excercises prepared at home and in class.

Recommended Reading

-

Chapter 5 in Operating System Concepts, Enhanced eText, 10th Edition

Material (Slides, etc.)

-

Slides for lecture 3 and as HTML

Weekly Note 4 - Week 9 - Online from 23 February 2019

Lecture

No lecture this week

Tutorial session

No tutorial session this week

Weekly Note 5 - Week 10 - Online from 23 February 2019

Lecture - Tuesday, March 5th.

16-19 in U82

We will cover chapter 6 and 7 on Synchronization (Tools and Examples).

We will have a small review of the previous lecture, so this time it will be chapter 5, and we might sneak in a Kahoot.

Tutorial session

Thursday March 7th. 8-10 in U143 or Friday March 8th. 8-10 in U30

Preparation:

Make a list of 10-15 keywords for a 10 min. presentation with the topic: "Synchronization"

Prepare at home to discuss:

-

Race conditions are possible in many computer systems. Consider a banking system with two methods: deposit(amount) and withdraw(amount). These two methods are passed the amount that is to be deposited or withdrawn from a bank account. Assume that a husband and wife share a bank account and that concurrently the husband calls the withdraw() method and the wife calls deposit(). Describe how a race condition is possible and what might be done to prevent the race condition from occurring.

-

The first known correct software solution to the critical-section problem for two processes was developed by Dekker. The two processes, P 0 and P 1 , share the following variables:

boolean flag[2]; /* initially false */ int turn;

The structure of process Pi (i = 0 or 1) is shown in the Figure below; the other process is Pj, (j = 1 or 0). Prove that the algorithm satisfies all three requirements for the critical-section problem.

do {

flag[i] = true;

while (flag[j]) {

if (turn == j) {

flag[i] = false;

while (turn == j) {

; /* do nothing */

}

flag[i] = true;

}

}

/* critical section */

turn = j;

flag[i] = false;

/* remainder section */

} while (true);-

Explain why implementing synchronization primitives by disabling interrupts is not appropriate in a single-processor system if the synchronization primitives are to be used in user-level programs.

-

Explain why interrupts are not appropriate for implementing synchronization primitives in multiprocessor systems.

-

The Linux kernel has a policy that a process cannot hold a spinlock while attempting to acquire a semaphore. Explain why this policy is in place.

-

Describe two kernel data structures in which race conditions are possible. Be sure to include a description of how a race condition can occur.

-

Assume that a system has multiple processing cores. For each of the following scenarios, describe which is a better locking mechanism-a spinlock or a mutex lock where waiting processes sleep while waiting for the lock to become available:

-

The lock is to be held for a short duration.

-

The lock is to be held for a long duration.

-

The thread may be put to sleep while holding the lock.

-

-

Assume a context switch takes T time. Suggest an upper bound (in terms of T ) for holding a spin lock and that if the spin lock is held for any longer duration, a mutex lock (where waiting threads are put to sleep) is a better alternative.

-

Demonstrate that monitors and semaphores are equivalent insofar as they can be used to implement the same types of synchronization problems.

-

Discuss the tradeoff between fairness and throughput of operations in the readers-writers problem. Propose a method for solving the readers-writers problem without causing starvation.

-

How does the signal() operation associated with monitors differ from the corresponding operation defined for semaphores?

In class:

Use the first 30 minutes to work on those exercises:

-

The first known correct software solution to the critical-section problem for n processes with a lower bound on waiting of n − 1 turns was presented by Eisenberg and McGuire. The processes share the following variables:

enum pstate {idle, want_in, in_cs};

pstate flag[n];

int turn;

All the elements of flag are initially idle; the initial value of turn is immaterial (between 0 and n − 1 ). The structure of process Pi is shown in the Figure below. Prove that the algorithm satisfies all three requirements for the critical-section problem.

do {

while (true) {

flag[i] = want in;

j = turn;

while (j != i) {

if (flag[j] != idle) {

j = turn;

} else {

j = (j + 1) % n;

}

flag[i] = in cs;

j = 0;

while ( (j < n) && (j == i || flag[j] != in cs)) {

j++;

}

if ( (j >= n) && (turn == i || flag[turn] == idle)) {

break;

}

}

/* critical section */

j = (turn + 1) % n;

while (flag[j] == idle) {

j = (j + 1) % n;

}

turn = j;

flag[i] = idle;

/* remainder section */

} while (true);-

Consider how to implement a mutex lock using an atomic hardware instruction. Assume that the following structure defining the mutex lock is available:

typedef struct {

int available;

} lock;

where ( available == 0 ) indicates the lock is available; a value of 1 indicates the lock is unavailable. Using this struct, illustrate how the following functions may be implemented using the test_and_set() and compare_and_swap() instructions.

void acquire(lock *mutex)

void release(lock *mutex)

Be sure to include any initialization that may be necessary.

-

Consider the code example for allocating and releasing processes shown in the Figure below.

#define MAX PROCESSES 255

int number of processes = 0;

/* the implementation of fork() calls this function */

int allocate process() {

int new pid;

if (number of processes == MAX PROCESSES) {

return -1;

} else {

/* allocate necessary process resources */

++number of processes;

return new pid;

}

}

/* the implementation of exit() calls this function */

void release process() {

/* release process resources */

--number of processes;

}

-

Identify the race condition(s).

-

Assume you have a mutex lock named mutex with the operations acquire() and release(). Indicate where the locking needs to be placed to prevent the race condition(s).

-

Could we replace the integer variable

int number_of_processes = 0

with the atomic integer

atomic_t number_of_processes = 0to prevent the race condition(s)?

-

Suppose we replace the

wait()andsignal()operations of monitors with a single constructawait(B), where B is a general Boolean expression that causes the process executing it to wait until B becomes true.-

Write a monitor using this scheme to implement the readers-writers problem.

-

Explain why, in general, this construct cannot be implemented efficiently.

-

Why is it important for the scheduler to distinguish I/O-bound programs from CPU- bound programs?

-

-

Describe how the

compare_and_swap()(not described in detail in the lecture) instruction can be used to-

provide mutual exclusion

-

provide mutual exclusion that satisfies the bounded-waiting requirement.

-

Then spend the remaining time discussing your list of keywords, and the excercises prepared at home and in class.

Recommended Reading

-

Chapter 6 and 7 in Operating System Concepts, Enhanced eText, 10th Edition

Material (Slides, etc.)

-

Slides for lecture 4 and as HTML

Weekly Note 6 - Week 11 - Online from 06 March 2019

Lecture - Tuesday, March 12th.

16-19 in U82

We will cover chapter 8 on deadlocks, and study how to detect and handle them. This chapter will be the primary material for exam topic 5: "Deadlocks".

We will have a small review of the previous lecture, so this time it will be chapters 6 and 7, and we probably sneak in a Kahoot.

I will bring some feedback and review of project 2, and we will do the mid-term evaluation.

Tutorial session

Thursday March 14th. 14-16 in U156 or Friday March 15th. 8-10 in U82

Preparation:

Make a list of 10-15 keywords for a 10 min. presentation with the topic: "Deadlocks"

Prepare at home to discuss:

-

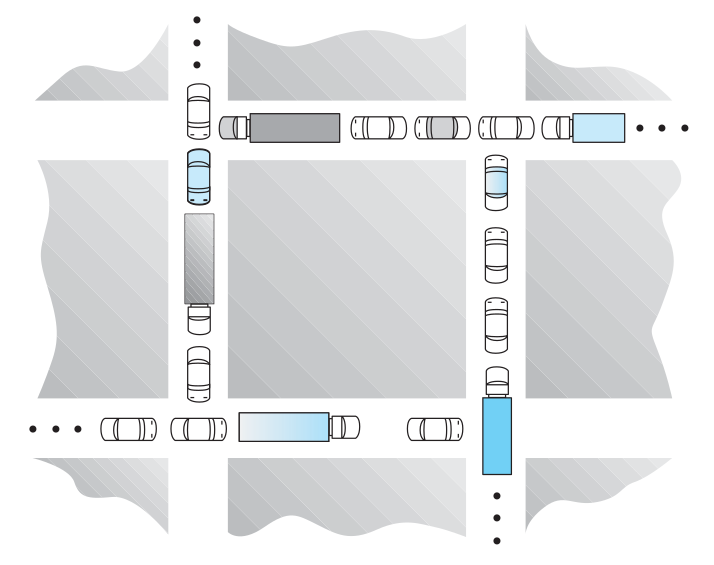

Consider the trafic deadlock depicted the Figure below

-

Show that the four necessary conditions for deadlock hold in this example.

-

State a simple rule for avoiding deadlocks in this system.

-

-

Assume a multithreaded application uses only reader–writer locks for synchronization. Applying the four necessary conditions for deadlock, is deadlock still possible if multiple reader–writer locks are used?

-

The program example shown below doesn’t always lead to deadlock. Describe what role the CPU scheduler plays and how it can contribute to deadlock in this program.

/* thread one runs in this function */

void *do_work_one(void *param) {

pthread_mutex_lock(&first mutex);

pthread_mutex_lock(&second mutex);

/**

* Do some work

*/

pthread_mutex_unlock(&second mutex);

pthread_mutex_unlock(&first mutex);

pthread_exit(0);

}

/* thread two runs in this function */

void *do work two(void *param) {

pthread_mutex_lock(&second mutex);

pthread_mutex_lock(&first mutex);

/**

* Do some work

*/

pthread_mutex_unlock(&first mutex);

pthread_mutex_unlock(&second mutex);

pthread_exit(0);

}

-

Compare the circular-wait scheme with the various deadlock-avoidance schemes (like the banker’s algorithm) with respect to the following issues:

-

Runtime overheads

-

System throughput

-

-

Consider the version of the dining-philosophers problem in which the chopsticks are placed at the center of the table and any two of them can be used by a philosopher. Assume that requests for chopsticks are made one at a time. Describe a simple rule for determining whether a particular request can be satisfied without causing deadlock given the current allocation of chopsticks to philosophers.

-

Consider again the setting in the preceding question. Assume now that each philosopher requires three chopsticks to eat. Resource requests are still issued one at a time. Describe some simple rules for determining whether a particular request can be satisfied without causing deadlock given the current allocation of chopsticks to philosophers.

-

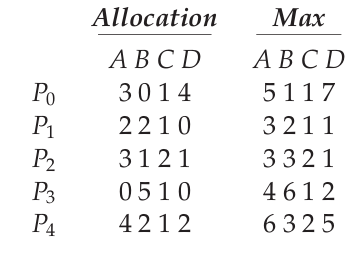

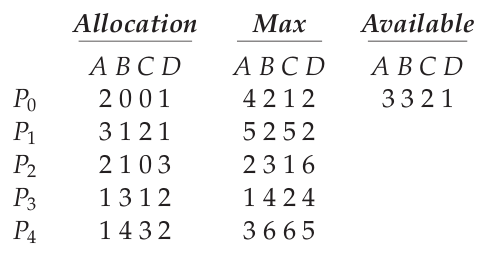

Consider the following snapshot of a system:

Using the banker’s algorithm, determine whether or not each of the following states is unsafe. If the state is safe, illustrate the order in which the processes may complete. Otherwise, illustrate why the state is unsafe.-

Available = (0, 3, 0, 1)

-

Available = (1, 0, 0, 2)

-

-

A single-lane bridge connects the two Vermont villages of North Tunbridge and South Tunbridge. Farmers in the two villages use this bridge to deliver their produce to the neighboring town. The bridge can become deadlocked if both a northbound and a southbound farmer get on the bridge at the same time (Vermont farmers are stubborn and are unable to back up). Using semaphores, design an algorithm that prevents dead- lock. Initially, do not be concerned about starvation (the situation in which northbound farmers prevent southbound farmers from using the bridge, and vice versa).

-

Modify your solution to the previous, so that it is starvation-free.

In class:

Use the first 30 minutes to work on those exercises:

-

In a real computer system, neither the resources available nor the demands of processes for resources are consistent over long periods (months). Resources break or are replaced, new processes come and go, new resources are bought and added to the system. If deadlock is controlled by the banker’s algorithm, which of the following changes can be made safely (without introducing the possibility of deadlock), and under what circumstances?

-

Increase Available (new resources added)

-

Decrease Available (resource permanently removed from system)

-

Increase Max for one process (the process needs or wants more resources than allowed).

-

Decrease Max for one process (the process decides it does not need that many resources)

-

Increase the number of processes

-

Decrease the number of processes

-

-

Consider a system consisting of four resources of the same type that are shared by three processes, each of which needs at most two resources. Show that the system is deadlock-free.

-

Consider a system consisting of m resources of the same type being shared by n processes. A process can request or release only one resource at a time. Show that the system is deadlock free if the following two conditions hold: i.) The maximum need of each process is between 1 and m resources, and ii.) The sum of all maximum needs is less than m + n

-

Consider the following snapshot of a system:

Assume the Available vector being (A,B,C,D)=(3,3,2,1). Answer the following questions using the banker’s algorithm:-

Illustrate that the system is in a safe state by demonstrating an order in which the processes may complete.

-

If a request from process P1 arrives for (1, 1, 0, 0), can the request be granted immediately?

-

If a request from process P4 arrives for (0, 0, 2, 0), can the request be granted immediately?

-

Then spend the remaining time discussing your list of keywords, and the excercises prepared at home and in class.

Recommended Reading

-

Chapter 8 in Operating System Concepts, Enhanced eText, 10th Edition

Material (Slides, etc.)

-

Slides for lecture 5 and as HTML

Weekly Note 7 - Week 12 - Online from 16 March 2019

Lecture - Tuesday, March 19th.

16-19 in U82

We will cover chapter 9 on main memory, and study how to detect and handle them. This chapter will be the primary material for exam topic 6: "Main Memory".

We will have a small review of the previous lecture, so this time it will be chapters 8, and we probably sneak in a Kahoot.

Tutorial session

Thursday March 21th. 8-10 in U166 or Friday March 22th. 8-10 in U82

Preparation:

Make a list of 10-15 keywords for a 10 min. presentation with the topic: "Main Memory"

Prepare at home to discuss:

-

Explain the difference between internal and external fragmentation.

-

Consider the following process for generating binaries. A compiler is used to generate the object code for individual modules, and a linkage editor is used to combine multiple object modules into a single program binary.

-

How does the linkage editor change the binding of instructions and data to memory addresses?

-

What information needs to be passed from the compiler to the linkage editor to facilitate the memory binding tasks of the linkage editor?

-

-

Given five memory partitions of 100 KB, 500 KB, 200 KB, 300 KB, and 600 KB (in order), how would each of the first-fit, best-fit, and worst-fit algorithms place processes of 212 KB, 417 KB, 112 KB, and 426 KB (in order)? Which algorithm makes the most efficient use of memory?

-

Most systems allow programs to allocate more memory to its address space during execution. Data allocated in the heap segments of programs are an example of such allocated memory. What is required to support dynamic memory allocation in the following schemes:

-

contiguous-memory allocation

-

paging

-

-

Compare the main memory organization schemes of contiguous memory allocation, and paging with respect to the following issues:

-

external fragmentation

-

internal fragmentation

-

ability to share code across processes

-

-

On a system with paging, a process cannot access memory that it does not own; why? How could the operating system allow access to other memory? Why should it or should it not?

-

Assuming a 1 KB page size, what are the page numbers and offsets for the following address references (provided as decimal numbers):

-

2375

-

19366

-

30000

-

256

-

16385

-

-

Consider a logical address space of 32 pages with 1024 words per page; mapped onto a physical memory of 16 frames.

-

How many bits are required in the logical address?

-

How many bits are required in the physical address?

-

In class:

Use the first 30 minutes to work on those exercises:

-

Consider a paging system with the page table stored in memory.

-

If a memory reference takes 200 nanoseconds, how long does a paged memory reference take?

-

If we add TLBs, and 75 percent of all page-table references are found in the TLBs, what is the effective memory reference time? (Assume that finding a page-table entry in the TLBs takes zero time, if the entry is there.)

-

-

What is the purpose of paging the page tables?

-

Consider a computer system with a 32-bit logical address and 4-KB page size. The system supports up to 512 MB of physical memory. How many entries are there in each of the following?

-

A conventional single-level page table?

-

An inverted page table?

-

-

Program binaries in many systems are typically structured as follows. Code is stored starting with a small fixed virtual address such as 0. The code segment is followed by the data segment that is used for storing the program variables. When the program starts executing, the stack is allocated at the other end of the virtual address space and is allowed to grow towards lower virtual addresses. What is the significance of the above structure for the following schemes:

-

contiguous-memory allocation

-

paging

-

Then spend the remaining time discussing your list of keywords, and the excercises prepared at home and in class.

Recommended Reading

-

Chapter 9 in Operating System Concepts, Enhanced eText, 10th Edition

Material (Slides, etc.)

-

Slides for lecture 6 and as HTML

Weekly Note 8 - Week 13 - Online from 22 March 2019

Lecture - Tuesday, March 26th.

16-19 in U82

We will cover chapter 10 on virtual memory. This chapter will be the primary material for exam topic 7: "Virtual Memory".

We will have a small review of the previous lecture, so this time it will be chapters 9, and have the Kahoot as well.

Tutorial session

Friday March 22th. 10-12 in U166

Preparation:

Make a list of 10-15 keywords for a 10 min. presentation with the topic: "Virtual Memory"

Prepare at home to discuss:

-

Assume a program has just referenced an address in virtual memory. Describe a scenario how each of the following can occur: (If a scenario cannot occur, explain why.)

-

TLB miss with no page fault

-

TLB miss and page fault

-

TLB hit and no page fault

-

TLB hit and page fault

-

-

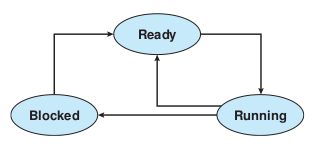

A simplified view of thread states is Ready, Running, and Blocked, where a thread is either ready and waiting to be scheduled, is running on the processor, or is blocked (for example, waiting for I/O). This is illustrated in the figure below. Assuming a thread is in the Running state, answer the following questions, and explain your answer:

-

Will the thread change state if it incurs a page fault? If so, to what new state?

-

Will the thread change state if it generates a TLB miss that is resolved in the page table? If so, to what new state?

-

Will the thread change state if an address reference is resolved in the page table? If so, to what new state? Consider a system that uses pure demand paging:

-

-

When a process first starts execution, how would you characterize the page fault rate?

-

Once the working set for a process is loaded into memory, how would you characterize the page fault rate?

-

Assume a process changes its locality and the size of the new working set is too large to be stored into available free memory. Identify some options system designers could choose from to handle this situation?

-

-

What is the copy-on-write feature, and under what circumstances is it beneficial? What hardware support is required to implement this feature?

-

Consider the following page reference string:

7, 2, 3, 1, 2, 5, 3, 4, 6, 7, 7, 1, 0, 5, 4, 6, 2, 3, 0 , 1. Assuming demand paging with three frames, how many page faults would occur using-

LRU replacement

-

FIFO replacement

-

Optimal replacement

-

-

Assume that you are monitoring the rate at which the pointer in the clock algorithm (which indicates the candidate page for replacement) moves. What can you say about the system if you notice the following behavior:

-

pointer is moving fast

-

pointer is moving slow

-

-

Discuss situations in which the LFU page-replacement algorithm generates fewer page faults than the LRU page-replacement algorithm. Also discuss under what circumstances the opposite holds.

-

Discuss situations in which the MFU page-replacement algorithm generates fewer page faults than the LRU page-replacement algorithm. Also discuss under what circumstances the opposite holds

-

Consider a demand-paging system with the following time-measured utilizations:

-

CPU utilization: 20%

-

Paging disk: 97.7%

-

Other I/O devices: 5%

For each of the following, say whether it will (or is likely to) improve CPU utilization. Explain your answers.-

Install a faster CPU

-

Install a bigger paging disk

-

Increase the degree of multiprogramming.

-

Decrease the degree of multiprogramming.

-

Install more main memory.

-

Install a faster hard disk or multiple controllers with multiple hard disks.

-

Add prepaging to the page fetch algorithms.

-

Increase the page size.

-

-

-

What is the cause of thrashing? How does the system detect thrashing? Once it detects thrashing, what can the system do to eliminate this problem?

In class:

Use the first 30 minutes to work on those exercises:

-

Suppose that your replacement policy (in a paged system) is to examine each page regularly and to discard that page if it has not been used since the last examination. What would you gain and what would you lose by using this policy rather than LRU or second-chance replacement?

-

Consider a demand-paging system with a paging disk that has an average access and transfer time of 20 milliseconds. Addresses are translated through a page table in main memory, with an access time of 1 microsecond per memory access. Thus, each memory reference through the page table takes two accesses. To improve this time, we have added an associative memory that reduces access time to one memory reference, if the page-table entry is in the associative memory. Assume that 80 percent of the accesses are in the associative memory and that, of those remaining, 10 percent (or 2 percent of the total) cause page faults. What is the effective memory access time?

-

Assume we have a demand-paged memory. The page table is held in registers. It takes 8 milliseconds to service a page fault if an empty page is available or the replaced page is not modified, and 20 milliseconds if the replaced page is modified. Memory access time is 100 nanoseconds. Assume that the page to be replaced is modified 70 percent of the time. What is the maximum acceptable page-fault rate for an effective access time of no more than 200 nanoseconds?

-

The slab allocation algorithm uses a separate cache for each different object type. Assuming there is one cache per object type, explain why this doesn’t scale well with multiple CPUs. What could be done to address this scalability issue?

-

Consider a system that allocates pages of different sizes to its processes. What are the advantages of such a paging scheme? What modifications to the virtual memory system provide this functionality?

Then spend the remaining time discussing your list of keywords, and the excercises prepared at home and in class.

Recommended Reading

-

Chapter 10 in Operating System Concepts, Enhanced eText, 10th Edition

Material (Slides, etc.)

-

Slides for lecture 7 and as HTML

Weekly Note 9 - Week 14 - Online from 31 March 2019

Lecture - Tuesday, April 2nd.

16-19 in U82

We will cover chapter 11 on mass-storage structure and chapter 12 on I/Osystems. This will be the primary material for exam topic 8: "Mass-Storage Structure and I/O Systems".

We will have a small review of the previous lecture, so this time it will be chapters 10, and have the Kahoot as well.

Tutorial session

Friday April 5th. 12-14 in U166 This week there is again only one session.

Preparation:

Make a list of 10-15 keywords for a 10 min. presentation with the topic: "Mass-Storage Structure and I/O Systems"

Prepare at home to discuss:

-

None of the disk-scheduling disciplines, except FCFS, is truly fair (starvation may occur).

-

Explain why this assertion is true.

-

Describe a way to modify algorithms such as SCAN to ensure fairness.

-

Explain why fairness is an important goal in a time-sharing system.

-

Give three or more examples of circumstances in which it is important that the operating system be unfair in serving I/O requests.

-

-

Explain why SSDs often use a FCFS disk scheduling algorithm.

-

Suppose that a disk drive has 5000 cylinders, numbered 0 to 4999. The drive is currently serving a request at cylinder 2150, and the previous request was at cylinder 1805. The queue of pending requests, in FIFO order, is:

2069, 1212, 2296, 2800, 544, 1618, 356, 1523, 4965, 3681. Starting from the current head position, what is the total distance (in cylinders) that the disk arm moves to satisfy all the pending requests for each of the following disk-scheduling algorithms?-

FCFS

-

SCAN

-

C-SCAN

-

-

Describe some advantages and disadvantages of using SSDs as a caching tier and as a disk drive replacement compared to a system with just magnetic disks.

-

Consider a RAID Level 5 organization comprising five disks, with the parity for sets of four blocks on four disks stored on the fifth disk. How many blocks are accessed in order to perform the following?

-

A write of one block of data

-

A write of seven continuous blocks of data

-

-

Compare the throughput achieved by a RAID Level 5 organization with that achieved by a RAID Level 1 organization for the following:

-

Read operations on single blocks

-

Read operations on multiple contiguous blocks

-

-

Assume that you have a mixed configuration comprising disks organized as RAID Level 1 and as RAID Level 5 disks. Assume that the system has exibility in deciding which disk organization to use for storing a particular file. Which files should be stored in the RAID Level 1 disks and which in the RAID Level 5 disks in order to optimize performance?

-

Discuss the relative advantages and disadvantages of sector sparing and sector slipping.

-

When multiple interrupts from different devices appear at about the same time, a priority scheme could be used to determine the order in which the interrupts would be serviced. Discuss what issues need to be considered in assigning priorities to different interrupts.

-

What are the advantages and disadvantages of supporting memory-mapped I/O to device-control registers?

-

In most multiprogrammed systems, user programs access memory through virtual addresses, while the operating system uses raw physical addresses to access memory. What are the implications of this design on the initiation of I/O operations by the user program and their execution by the operating system?

In class:

Use the first 30 minutes to work on those exercises:

-

What are the various kinds of performance overheads associated with servicing an interrupt?

-

Describe three circumstances under which blocking I/O should be used. Describe three circumstances under which non-blocking I/O should be used. Why not just implement non-blocking I/O and have processes busy-wait until their device is ready?

-

The reliability of a hard-disk drive is typically described in terms of a quantity called mean time between failures (MTBF). Although this quantity is called a "time", the MTBF actually is measured in drivehours per failure.

-

If a system contains 1000 drives, each of which has a 750,000-hour MTBF, which of the following best describes how often a drive failure will occur in that disk farm: once per thousand years, once per century, once per decade, once per year, once per month, once per week, once per day, once per hour, once per minute, or once per second?

-

Mortality statistics indicate that, on the average, a U.S. resident has about 1 chance in 1000 of dying between ages 20 and 21 years. Deduce the MTBF hours for 20 year olds. Convert this figure from hours to years. What does this MTBF tell you about the expected lifetime of a 20 year old?

-

The manufacturer guarantees a 1-million-hour MTBF for a certain model of disk drive. What can you conclude about the number of years for which one of these drives is under warranty?

-

-

Typically, at the completion of a device I/O, a single interrupt is raised and appropriately handled by the host processor. In certain settings, however, the code that is to be executed at the completion of the I/O can be broken into two separate pieces. The first piece executes immediately after the I/O completes and schedules a second interrupt for the remaining piece of code to be executed at a later time. What is the purpose of using this strategy in the design of interrupt handlers?

Then spend the remaining time discussing your list of keywords, and the excercises prepared at home and in class.

Recommended Reading

-

Chapter 11 and 12 in Operating System Concepts, Enhanced eText, 10th Edition

Material (Slides, etc.)

-

Slides for lecture 8 and as HTML

Weekly Note 10 - Week 15 - Online from 04 April 2019

Lecture - Tuesday, April 9nd.

16-19 in U82

This week we will cover the most relevant parts of the three chapters that relate to file systems: 13 - File system interface, 14 - File System Implementation and 15 - File system internals.

We will have a small review of the previous lecture, so this time it will be chapters 11 and 12, and have the Kahoot as well.

Tutorial session

Thursday April 11th. 08-10 or 12-14 in U166.

Preparation:

Make a list of 10-15 keywords for a 10 min. presentation with the topic: "File-System and Implementing File-Systems"

Prepare at home to discuss:

-

Consider a file system where a file can be deleted and its disk space Reclaimed while links to that file still exist. What problems may occur if a new file is created in the same storage area or with the same absolute path name? How can these problems be avoided?

-

The open-file table is used to maintain information about files that are currently open. Should the operating system maintain a separate table for each user or just maintain one table that contains references to files that are being accessed by all users at the current time? If the same file is being accessed by two different programs or users, should there be separate entries in the open file table?

-

What are the advantages and disadvantages of a system providing mandatory locks instead of providing advisory locks whose usage is left to the users' discretion?

-

Provide examples of applications that typically access files according to the following methods: i.) Sequential, ii.) Random

-

Some systems automatically open a file when it is referenced for the first time, and close the file when the job terminates. Discuss the advantages and disadvantages of this scheme as compared to the more traditional one, where the user has to open and close the file explicitly.

-

If the operating system were to know that a certain application is going to access the file data in a sequential manner, how could it exploit this information to improve performance?

-

Give an example of an application that could benefit from operating system support for random access to indexed files.

-

Contrast the performance of the three techniques for allocating disk blocks (contiguous, linked, and indexed) for both sequential and random file access.

-

Consider a system where free space is kept in a free-space list. Suppose that the pointer to the free-space list is lost. Can the system reconstruct the free-space list? Explain your answer. Consider a file system similar to the one used by UNIX with indexed allocation. How many disk I/O operations might be required to read the contents of a small local file at /a/b/c? Assume that none of the disk blocks is currently being cached. Suggest a scheme to ensure that the pointer is never lost as a result of memory failure.

-

Some file systems allow disk storage to be allocated at different levels of granularity. For instance, a file system could allocate 4 KB of disk space as a single 4-KB block or as eight 512-byte blocks. How could we take advantage of this exibility to improve performance? What modifications would have to be made to the freespace management scheme in order to support this feature?

-

Consider a file system on a disk that has both logical and physical block sizes of 512 bytes. Assume that the information about each file is already in memory. For each of the three allocation strategies (contiguous, linked, and indexed), answer these questions: How is the logical-to-physical address mapping accomplished in this system? (For the indexed allocation, assume that a file is always less than 512 blocks long.) If we are currently at logical block 10 (the last block accessed was block 10) and want to access logical block 4, how many physical blocks must be read from the disk?

-

Fragmentation on a storage device could be eliminated by recompaction of the information. Typical disk devices do not have relocation or base registers (such as are used when memory is to be compacted), so how can we relocate files? Give three reasons why recompacting and relocation of files often are avoided.

-

Explain why logging metadata updates ensures recovery of a file system after a file system crash.

In class:

Use the first 30 minutes to work on those exercises:

-

Discuss the advantages and disadvantages of supporting links to files that cross mount points (that is, the file link refers to a file that is stored in a different volume).

-

Some systems provide file sharing by maintaining a single copy of a file; other systems maintain several copies, one for each of the users sharing the file. Discuss the relative merits of each approach.

-

Discuss the advantages and disadvantages of associating with remote file systems (stored on file servers) a different set of failure semantics from that associated with local file systems.

-

What are the advantages of the variation of linked allocation that uses a FAT to chain together the blocks of a file?

-

Discuss how performance optimizations for file systems might result in difficulties in maintaining the consistency of the systems in the event of computer crashes.

-

Assume that in a particular augmentation of a remote-file-access protocol, each client maintains a name cache that caches translations from file names to corresponding file handles. What issues should we take into account in implementing the name cache?

Then spend the remaining time discussing your list of keywords, and the excercises prepared at home and in class.

Recommended Reading

-

Chapter 13, 14 and 15 in Operating System Concepts, Enhanced eText, 10th Edition

Material (Slides, etc.)

-

Slides for lecture 9 and as HTML

Weekly Note 11 - Week 17 - Online from 14 April 2019

Lecture - Tuesday, April 23rd.

16-19 in U82

This week we will cover Protection, as this is the smallest of the remaining topics, and this will leave us time to have code review of project 3.

We will also have a small review of the previous lecture, so this time it will be filesystems, and have the Kahoot as well.

Tutorial session

Thursday April 25th. 08-10 or 12-14 in U166.

Preparation:

Make a list of 10-15 keywords for a 10 min. presentation with the topic: "Protection"

Prepare at home to discuss:

-

What are the main differences between capability lists and access lists?

-

The access-control matrix could be used to determine whether a process can switch from, say, domain A to domain B and enjoy the access privileges of domain B. Is this approach equivalent to including the access privileges of domain B in those of domain A?

-

Discuss the strengths and weaknesses of implementing an access matrix using access lists that are associated with objects.

-

Discuss the strengths and weaknesses of implementing an access matrix using capabilities that are associated with domains.

-

What is the need-to-know principle? Why is it important for a protection system to adhere to this principle?

-

How are the access-matrix facility and the role-based access-control facility similar? How do they differ?

-

How does the principle of least privilege aid in the creation of protection systems?

-

Why is it difficult to protect a system in which users are allowed to do their own I/O? .

In class:

Use the first 45 minutes to discuss the exercises prepared at home and your list of keywords.

Use the last 45 minutes to discuss project 4. You can discuss with the TA any design choices you have considered, and get his opinion on the solution and its complexity. It is up to you to not overcomplicate project 4, and the design of your filesystem is a big factor in this.

Recommended Reading

-

Chapter 17 in Operating System Concepts, Enhanced eText, 10th Edition

Material (Slides, etc.)

-

Slides for lecture 10 and as HTML

Weekly Note 12 - Week 18 - Online from 27 April 2019

This week you should have extra time to work on the last project.

As the deadline for the last project is very close to the deadline for handing in the protocol, there is no guarantee that resubmissions will be possible before the ordinary exam. Thus the resubmission will likely be for the re-exam, so turn in project 4 (even if you are not completely done).

Lecture

No lecture this week.

Tutorial session

No tutorial session this week

Weekly Note 13 - Week 19 - Online from 30 April 2019

Lecture - Tuesday, May 7th.

16-19 in U82

This week we will cover the topic of Security, thus chapter 16 in the textbook.

We will have a discussion on how the exam is held, and you can signup for a preferred day and order for the exam.

We will also have a small review of the previous lecture, so this time it will be protection, and have the Kahoot as well.

Tutorial session

Thursday May 9th. 08-10 in U166 or 08-10 in U166.

Preparation:

Make a list of 10-15 keywords for a 10 min. presentation with the topic: "Security"

Prepare at home to discuss:

-

Buffer-overflow attacks can be avoided by adopting a better programming methodology or by using special hardware support. Discuss these solutions.

-

A password may become known to other users in a variety of ways. Is there a simple method for detecting that such an event has occurred? Explain your answer.

-

What is the purpose of using a "salt" along with the user-provided password? Where should the "salt" be stored, and how should it be used?

-

The list of all passwords is kept within the operating system. Thus, if a user manages to read this list, password protection is no longer provided. Suggest a scheme that will avoid this problem. (Hint: Use different internal and external representations.)

-

Make a list of six security concerns for a bank’s computer system. For each item on your list, state whether this concern relates to physical, human, or operating-system security.

-

What are two advantages of encrypting data stored in the computer system?

-

Compare symmetric and asymmetric encryption schemes, and discuss under what circumstances a distributed system would use one or the other.

-

An experimental addition to UNIX allows a user to connect a watchdog program to a file. The watchdog is invoked whenever a program requests access to the file. The watchdog then either grants or denies access to the file. Discuss pros and cons of using watchdogs for security.

-

What commonly used computer programs are prone to man-in-the-middle attacks? Discuss solutions for preventing this form of attack.

-

Discuss how the asymmetric encryption algorithm can be used to achieve the following goals.

-

Authentication: the receiver knows that only the sender could have generated the message.

-

Secrecy: only the receiver can decrypt the message.

-

Authentication and secrecy: only the receiver can decrypt the message, and the receiver knows that only the sender could have generated the message.

-

In class:

Use the first 45 minutes to discuss the exercises prepared at home and your list of keywords.

The last 45 minutes, Jørn will give an introduction to Sing OS and the challenges found when implementing an operating system from scratch.

Recommended Reading

-

Chapter 16 in Operating System Concepts, Enhanced eText, 10th Edition

Material (Slides, etc.)

-

Slides for lecture 11 and as HTML

Weekly Note 14 - Week 20 - Online from 30 April 2019

The exam questions and procedure can be found in the news section!

Lecture - Tuesday, May 14th.

16-19 in U82

This is the last lecture in the course, and we will cover the chapter on Virtual Machines.

We will have a code-review session on code found in project 4.

Finally, we will have a small review of the previous lecture, i.e. security, and have the Kahoot as well.

Tutorial session

This week there is only one session: Wednesday May 15th. 12-14 in U30.

Preparation:

Make a list of 10-15 keywords for a 10 min. presentation with the topic: "Virtual Machines"

Prepare at home to discuss:

-

Describe the three types of traditional hypervisors.

-

Describe four virtualization-like execution environments, and explain how they differ from “true” virtualization.

-

Describe four benefits of virtualization.

-

Why can VMM s not implement trap-and-emulate-based virtualization on some CPUs? Lacking the ability to trap-and-emulate, what method can a VMM use to implement virtualization?

-

What hardware assistance for virtualization can be provided by modern CPUs?

-

Why is live migration possible in virtual environments but much less possible for a native operating system?

In class:

Use the first 45 minutes to discuss the exercises prepared at home and your list of keywords.

The last 45 minutes, Jørn will give an introduction to Qubes OS that focusses on security and virtualization.

Recommended Reading

-

Chapter 18 in Operating System Concepts, Enhanced eText, 10th Edition

Material (Slides, etc.)

-

Slides for lecture 12 and as HTML

Projects

There will be a 4 projects in the course.

They will be independent, and the projects are a requirement for being eligible for the exam.

- Project 1 - Cycle Detection. Deadline: February 16th.

- Project 2 - System Call in UML. Deadline: March 9th.

- Project 3 - Kernel Module in UML. Deadline: April 14th.

- Project 4 - Log-Structured File Systems with FUSE. Deadline: May 10th.

Project 1

Project 2

Project 3

Project 4

Basic Info

This is the homepage for the course "Operating SYstems" a 10 ECTS course with a programming projects for students from IMADA

The lecturer is and Jacob Aae Mikkelsen (jamik@imada.sdu.dk)

The course is taught in english, if international students will be participating.

Schedule

Should follow roughly mitsdu.sdu.dk/skemaThe lectures is scheduled late in the day, as the lecturer is external, and usually not available during business hours.

If you have a need for a meeting, feel free to contact me, but expect it will be slotted around 16 in Vidensbyen/Cortex Park.

If you count the number of lectures (and tutorials) in mitsdu, there are scheduled too many. These will be cancelled at a later time, in case the lectur becomes unavailable due to conditions at work.

If you are in doubt, the weekly notes will tell you the truth. They are likely the most up-to-date information.

Literature

The course textbook will be:

Operating System Concepts, Enhanced eText, 10th EditionAbraham Silberschatz, Greg Gagne, Peter B. Galvin.

ISBN: 978-1119456339

We might use material from other sources, which will be announced here on the web page.